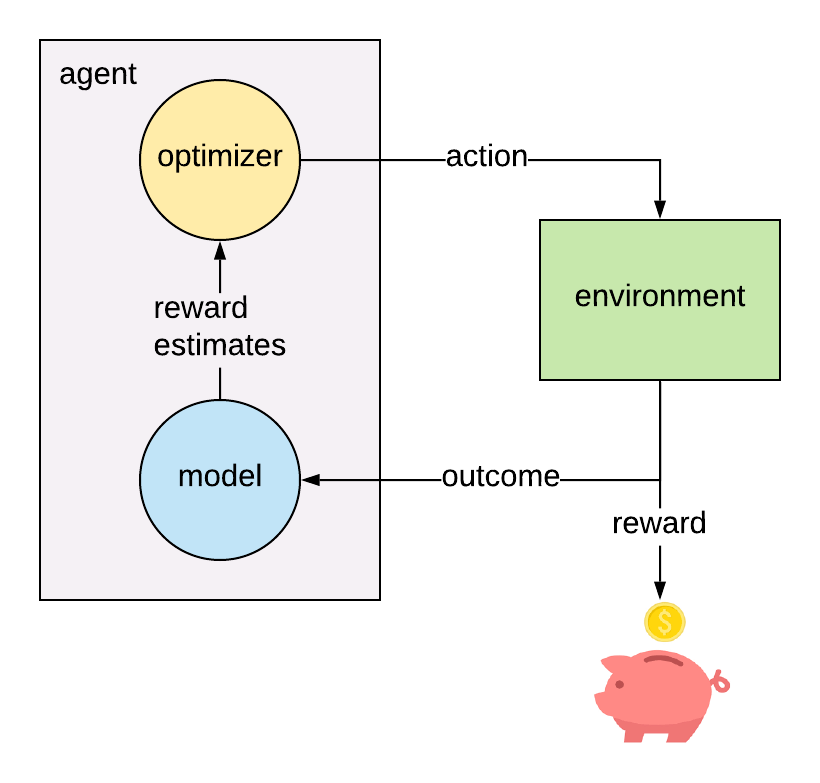

Applying the concepts of Nash, Bayesian, and correlated equilibria to the analysis of strategic interaction requires that players possess objective knowledge of the game and opponents' strategies. The proposed notions of subjective games and of subjective Nash and correlated equilibria replace essential but unavailable objective knowledge by subjective assessments. When playing a subjective game repeatedly, subjective optimizers converge to a subjective equilibrium. We apply this approach to some well known examples including single- and multi-person, multi-arm bandit games and repeated Cournot oligopoly games. Journal of Economic Literature Classification Numbers: C73 and C83.

The authors acknowledge valuable communications with Andreas Blume, Eddie Dekel-Tabak, Itzhak Gilboa, David Kreps, Sylvain Sorin, as well as participants in the 1993 Summer in Tel Aviv Workshop and in seminars at the University of California, San Diego, the California Institute of Technology, and the University of Chicago. The research was supported by NSF Economics Grants SES-9022305 and SBR-9223156 and by the Division of Humanities and Social Sciences of the California Institute of Technology. This paper is an extended version of "Bounded Learning Leads to Correlated Equilibrium" (see Kalai and Lehrer, 1991).

Statistical decision theory is concerned with making optimal decisions under statistical uncertainty, often maximizing expected utility. It can be applied to many areas such as economics, medicine, finance, and business, and draws heavily on Bayesian statistics, meta-analysis, optimization, POMDPs, reinforcement learning, causal modeling, game theory, and operations research. Goals include cost-benefit analyses (calculating expected utility of specific choices), defining relevant loss functions, the value of perfect data and the optimal amount of data to gather, balancing taking (estimated) optimal actions with learning about other suboptimal actions, inferring causal mechanisms in an environment, eliciting expert beliefs for priors, and examining sensitivity of conclusions about decisions to the data or modeling choices. Discussion of underlying philosophical issues like Newcomb's dilemma is permitted (but try to not be tedious).

Like I mentioned in a later post, I'm an author on several of the CFR papers. It is related to RL, and there are a few ways of interpreting CFR. If you have a multiarm bandits background, then CFR is kind of "An independent multiarm bandit at each decision point, all learning at the same time." If you have an RL background, then CFR is kind of "RL using an advantage function, but it converges in adversarial games."

CFR differs from standard RL in a few ways, including how it explores the tree. CFR has many variants, many of which focus on using sampling to trade off between fast, narrow, noisy updates versus slow, broad, precise updates. The most sampling-heavy variant, called Outcome Sampling, traces a single path through the tree, as you would in RL. However, it doesn't converge nearly as quickly as other variants, like Vanilla (explore the whole tree on each iteration) or Chance Sampling (sample the cards, explore the whole action space). Since those variants consider all paths through the tree, you don't have an explore/exploit tradeoff anymore, as you normally would in RL.
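To make the "independent multiarm bandit at each decision point" reading of CFR a bit more concrete, here is a minimal sketch of regret matching, the per-decision-point learner that CFR builds on, run in self-play on rock-paper-scissors. This is not full CFR: the game has a single decision point per player and no tree, and the updates use exact expected values over all actions (closer in spirit to the Vanilla variant than to Outcome Sampling). The function names, the payoff table, and the non-uniform starting regrets are assumptions made for illustration, not something taken from the CFR papers.

```python
# Payoff to a player choosing row action a against an opponent choosing column b.
# Actions: 0 = rock, 1 = paper, 2 = scissors. The game is symmetric and zero-sum.
PAYOFF = [
    [0, -1, 1],
    [1, 0, -1],
    [-1, 1, 0],
]


def strategy_from_regrets(regrets):
    """Regret matching: play each action in proportion to its positive regret."""
    positive = [max(r, 0.0) for r in regrets]
    total = sum(positive)
    if total > 0:
        return [p / total for p in positive]
    # No action has positive regret: fall back to the uniform strategy.
    return [1.0 / len(regrets)] * len(regrets)


def self_play(iterations, initial_regrets=(1.0, 0.0, 0.0)):
    """Run two regret-matching learners against each other.

    Returns each player's average strategy over all iterations.
    The initial regrets are a hypothetical starting bias, chosen so the
    learners do not begin at the equilibrium.
    """
    n = len(PAYOFF)
    regrets = [list(initial_regrets), list(initial_regrets)]
    strategy_sums = [[0.0] * n, [0.0] * n]

    for _ in range(iterations):
        # Both players pick their strategies before either one updates.
        strategies = [strategy_from_regrets(r) for r in regrets]
        for player in (0, 1):
            opponent = strategies[1 - player]
            # Expected payoff of each action against the opponent's current mix.
            action_values = [
                sum(PAYOFF[a][b] * opponent[b] for b in range(n))
                for a in range(n)
            ]
            # Expected payoff of the strategy actually played.
            expected = sum(strategies[player][a] * action_values[a] for a in range(n))
            for a in range(n):
                # Regret: how much better action a would have done.
                regrets[player][a] += action_values[a] - expected
                strategy_sums[player][a] += strategies[player][a]

    return [[s / iterations for s in sums] for sums in strategy_sums]


if __name__ == "__main__":
    for player, average in enumerate(self_play(10000)):
        print(player, [round(p, 3) for p in average])
```

The quantity to look at is the average strategy rather than the current one: in a two-player zero-sum game like this, the averages approach the equilibrium (here, roughly one third on each action), even though the per-iteration strategies may keep cycling.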